Interactive Installation

SENSPACE

Where collective calm becomes light

Immersive

Intelligent Installation Experience

The Concept

Senspace is an interactive audio-visual installation that transforms a room into a living biofeedback environment. Using computer vision, generative sound, and projection mapping, the space reads the collective movement of everyone inside and responds in real time — rewarding stillness with visual and sonic calm.

The installation is grounded in four theoretical frameworks: Attention Restoration Theory, Processing Fluency, Predictive Coding, and Perceived Control — each shaping how the environment guides participants from restlessness toward collective stillness.

On invisibility

“The moment a person notices the technology, their attention shifts from the experience to the interface, and the restorative quality collapses.”

02 / Process

The Biofeedback Loop

A ceiling-mounted camera reads your body. No sensors. No contact. The space mirrors your state back to you.

Sense

Computer vision detects breathing patterns through shoulder movement and body stillness through pose estimation, all from a single camera.
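To picture how shoulder movement could yield a breathing signal: if the vertical position of the shoulder keypoints is followed over time, each breath appears as one slow rise and fall. The sketch below counts upward crossings of the mean as breaths. It is an illustration only; the function name, the crossing-count method, and the input format are assumptions, not the installation's actual code.

```python
import math


def estimate_breaths_per_minute(shoulder_y, fps):
    """Estimate breathing rate from a series of shoulder heights (pixels).

    Hypothetical helper: counts upward crossings of the mean, one per breath.
    """
    if len(shoulder_y) < 2 or fps <= 0:
        return 0.0
    mean = sum(shoulder_y) / len(shoulder_y)
    centered = [y - mean for y in shoulder_y]
    # An upward crossing is a pair of samples going from below the mean to at or above it.
    crossings = sum(
        1 for prev, cur in zip(centered, centered[1:]) if prev < 0 <= cur
    )
    duration_s = len(shoulder_y) / fps
    return crossings / duration_s * 60.0


# Synthetic check: 20 seconds of a 0.25 Hz oscillation sampled at 30 fps.
signal = [math.sin(2 * math.pi * 0.25 * (i / 30) + 1.0) for i in range(600)]
bpm = estimate_breaths_per_minute(signal, fps=30)
```

A real camera signal would be noisier than this synthetic one, so some smoothing before counting crossings would likely be needed.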

Interpret

A chaos score from 0.0 to 1.0 is calculated in real time. If anyone in the room moves, the whole space feels it. Calm is collective.
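A minimal sketch of how a collective score of this kind might be computed, assuming each person already has a single motion value (for example, average keypoint displacement in pixels per frame). Taking the maximum over people is one way to make the whole room respond when anyone moves; the function name, the `saturation` parameter, and the choice of `max` are assumptions for illustration.

```python
def collective_chaos(per_person_motion, saturation=20.0):
    """Combine per-person motion values into one score in [0.0, 1.0].

    Assumptions: motion is in pixels per frame, `saturation` is the motion
    treated as fully chaotic, and the room takes the maximum over people.
    """
    if saturation <= 0:
        raise ValueError("saturation must be positive")
    # Normalize each person's motion and clamp it to the 0.0-1.0 range.
    scores = [min(max(m / saturation, 0.0), 1.0) for m in per_person_motion]
    # max() means a single moving person raises the score for everyone.
    return max(scores, default=0.0)
```

With two people still and one moving at half the saturation value, `collective_chaos([0.0, 0.0, 10.0])` gives 0.5; an empty room gives 0.0.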

Reflect

Fluid projections shift through four visual states. Five generative audio layers activate as stillness deepens. The environment becomes your mirror.

Transform

Without instruction, people slow down. The feedback loop guides behavior invisibly. Calm is not given. It is discovered together.

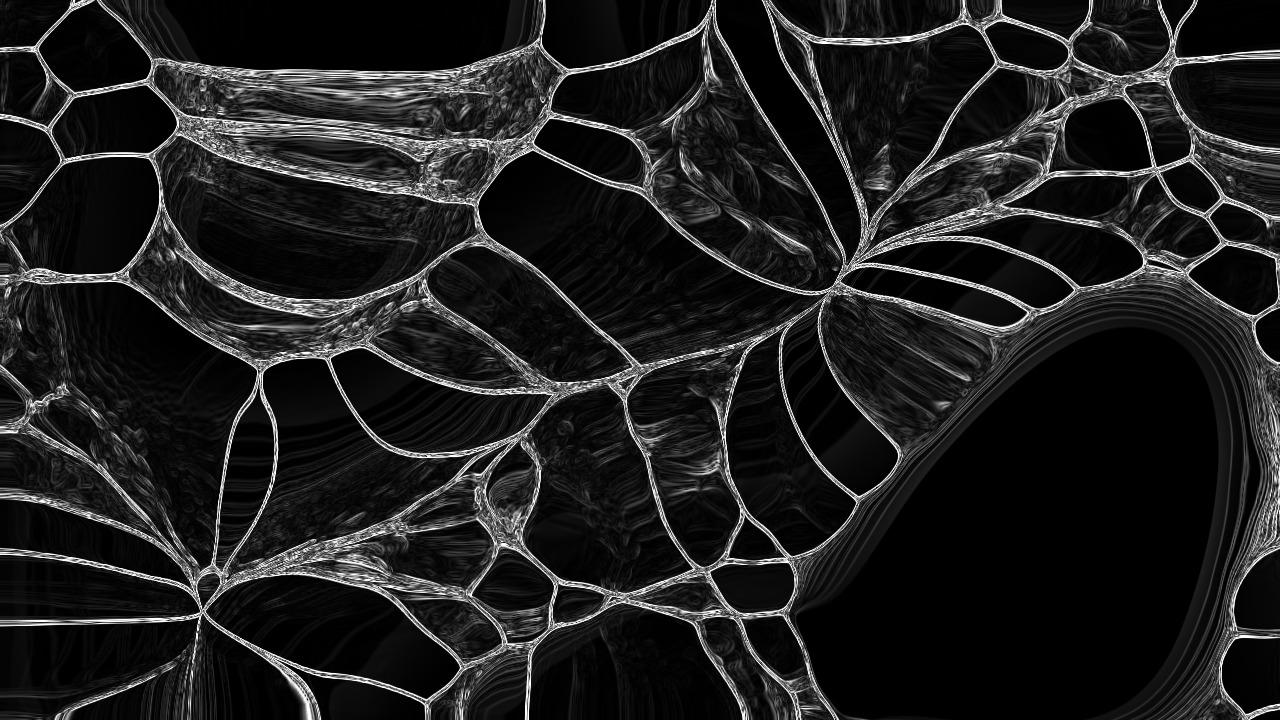

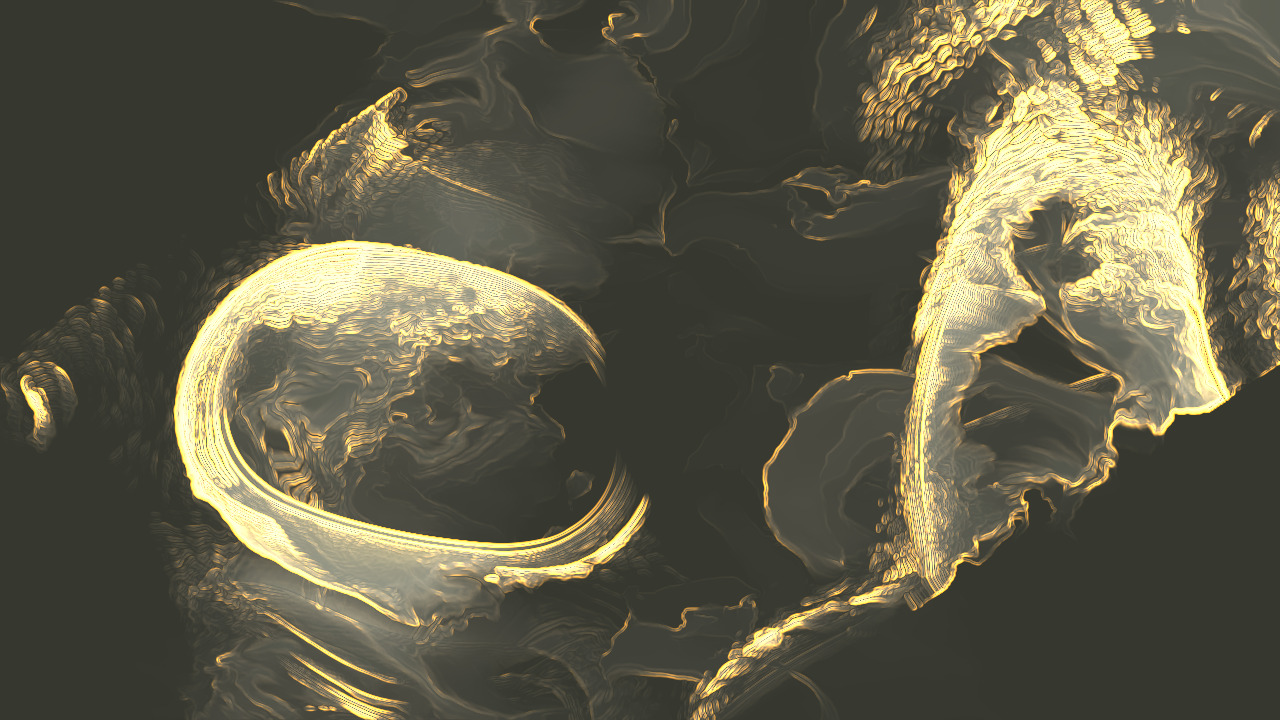

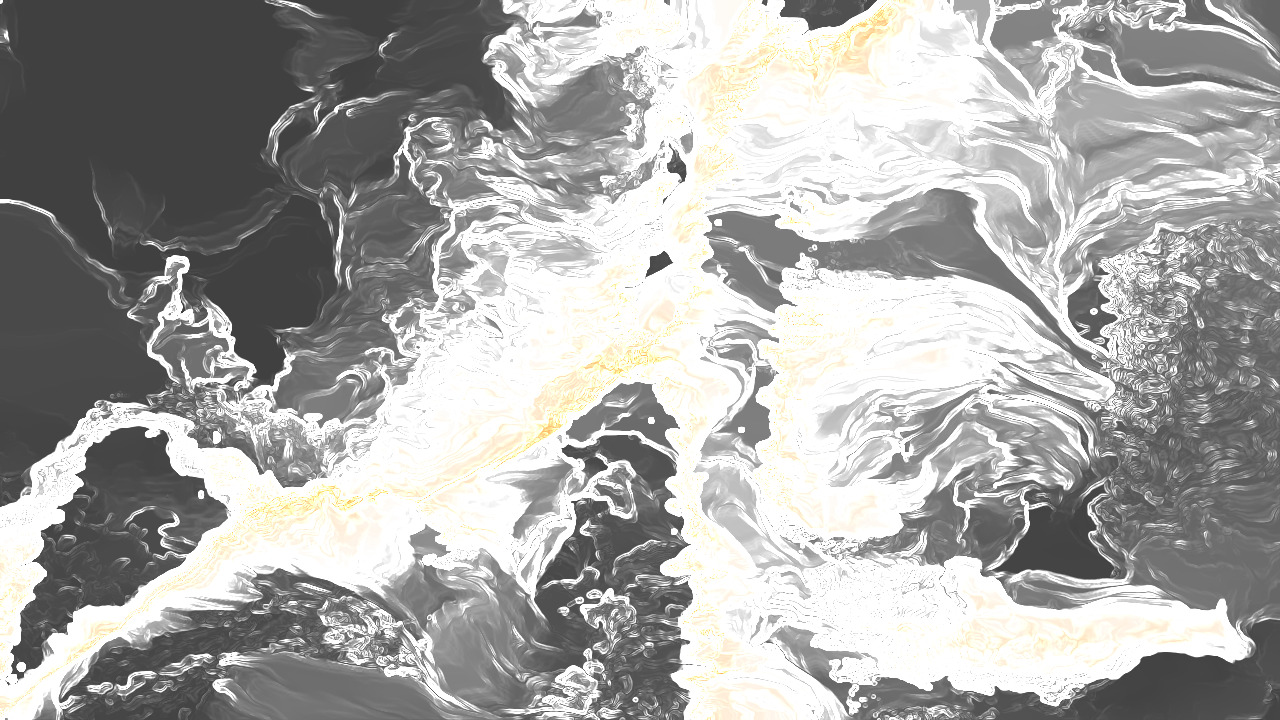

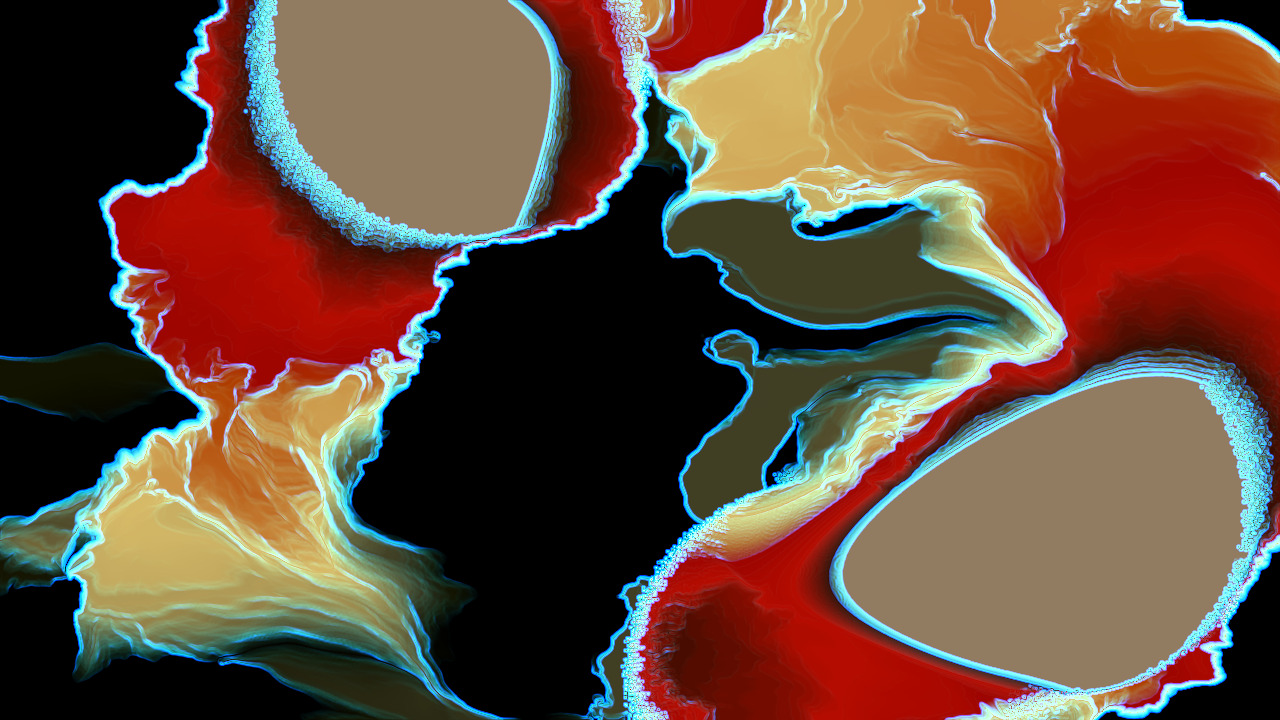

03 / Visual States

Four States of Being

The fluid simulation moves through four states inspired by stellar lifecycles, each driven by the collective chaos score.

- Chaos: 0.0 -

The Void

Near-total darkness. Faint drifting particles. Deep collective stillness achieved.

- Chaos: 0.2 – 0.4 -

The Flow

Luminous, slow-moving currents in warm tones. Gentle presence in the space.

- Chaos: 0.4 – 0.8 -

The Turbulence

Dense, fast-moving purple and white particle fields responding to active movement.

- Chaos: 0.8 – 1.0 -

The Dissolution

Scattered, disintegrating forms. A space overwhelmed by collective restlessness.
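The mapping from chaos score to visual state can be sketched as a simple lookup. Two points here are assumptions rather than facts from the installation: every score below 0.2 is treated as The Void, and each range includes its lower bound. The function name is hypothetical.

```python
def visual_state(chaos):
    """Return the visual state name for a chaos score in [0.0, 1.0]."""
    if not 0.0 <= chaos <= 1.0:
        raise ValueError("chaos must be between 0.0 and 1.0")
    if chaos < 0.2:   # assumed: everything below 0.2 is The Void
        return "The Void"
    if chaos < 0.4:
        return "The Flow"
    if chaos < 0.8:
        return "The Turbulence"
    return "The Dissolution"
```

In a live system a little hysteresis around each boundary would probably be needed so the projection does not flicker between states when the score hovers near a threshold.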

04 / Gallery

Inside the Installation

A glimpse into the immersive environment — projection, sound, and stillness converging in a single room.

05 / Technology

Under the Surface

End-to-End Latency

From camera capture to projection response

Computer Vision Pipeline

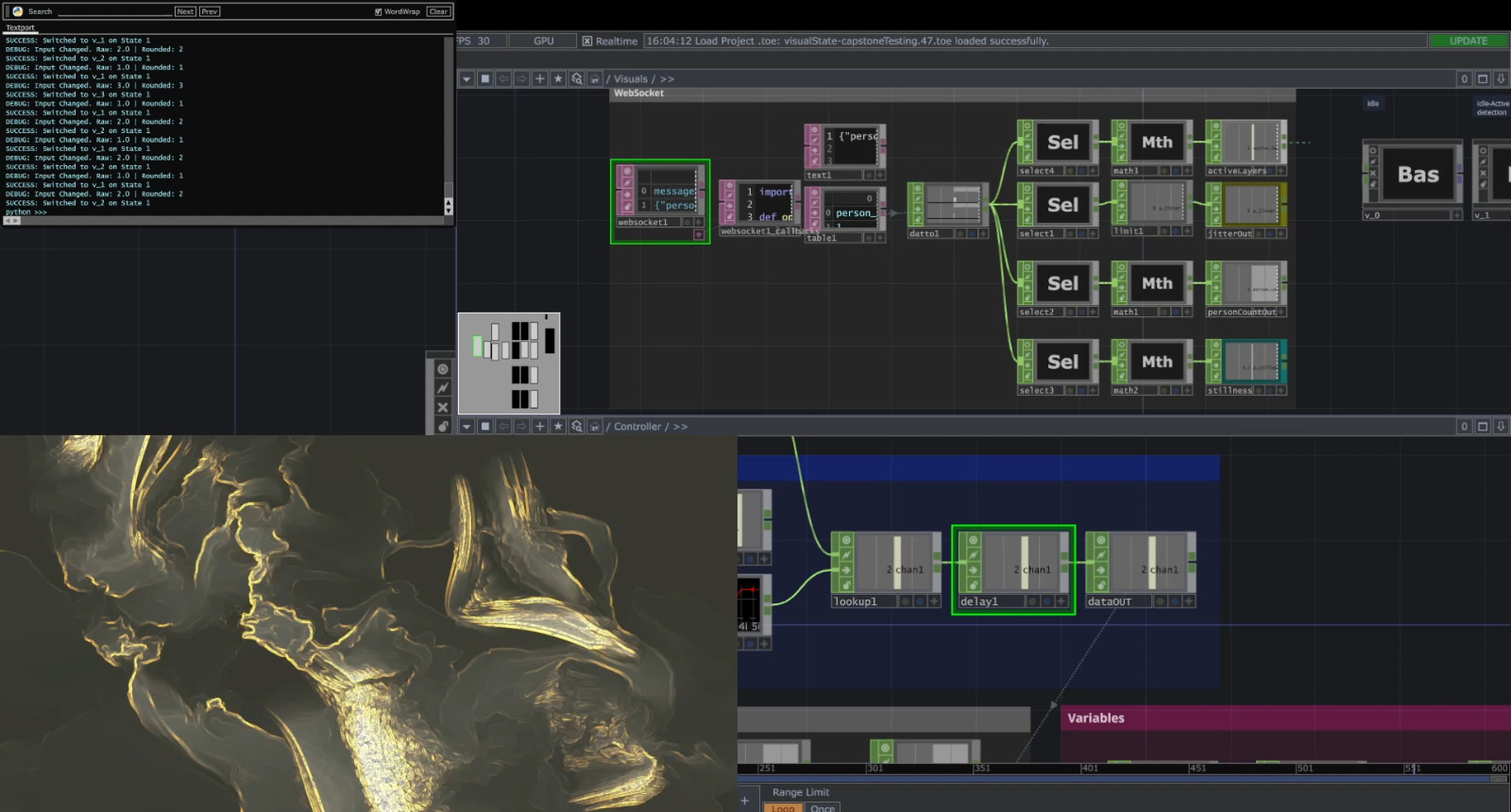

YOLO11n-pose detects 17 keypoints per person at 30fps. ByteTrack maintains identity across frames. No wearables required.
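Stable track identities are what make per-person movement measurable from frame to frame. The sketch below assumes the detector and tracker output has already been converted into a plain dictionary of track ID to 17 `(x, y)` keypoints; that format, the class name, and the mean-displacement measure are all hypothetical, chosen only to illustrate the idea.

```python
import math


class KeypointMotion:
    """Mean keypoint displacement per tracked person between frames."""

    def __init__(self):
        self._last = {}  # track_id -> keypoints seen in the previous frame

    def update(self, frame):
        """frame: dict mapping track_id -> list of (x, y) keypoints."""
        motion = {}
        for track_id, keypoints in frame.items():
            previous = self._last.get(track_id)
            if not keypoints or previous is None or len(previous) != len(keypoints):
                # New track or unusable data: no movement can be measured yet.
                motion[track_id] = 0.0
                continue
            dists = [
                math.hypot(x1 - x0, y1 - y0)
                for (x0, y0), (x1, y1) in zip(previous, keypoints)
            ]
            motion[track_id] = sum(dists) / len(dists)
        # Tracks missing from this frame are forgotten.
        self._last = dict(frame)
        return motion
```

Calling `update` once per frame returns one motion value per visible person, in pixels per frame.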

5-Layer Audio Engine

Five generative audio layers activate as collective stillness deepens, each one responding to a chaos-score threshold.
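As a rough illustration of threshold-driven layering, the sketch below switches on more layers as the chaos score falls. The five threshold values and the names are invented for the example; the installation's real thresholds are not stated here.

```python
# Hypothetical thresholds, one per audio layer, from first to last to activate.
LAYER_THRESHOLDS = [0.8, 0.6, 0.4, 0.2, 0.1]


def active_layers(chaos, thresholds=LAYER_THRESHOLDS):
    """Return one boolean per layer: True when the layer should be playing.

    A layer is active once the chaos score is at or below its threshold,
    so deeper stillness (lower chaos) activates more layers.
    """
    return [chaos <= t for t in thresholds]
```

With these example values, a chaos score of 0.5 activates the first two layers, and a score of 0.0 activates all five.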

06 / Team

Built By

Senspace is a Capstone Project at NYU, Spring 2026, advised by Prof. Nimrah Syed.

Yaakulya Sabbani

Computer Vision, System Architecture

Architected the full biofeedback pipeline: pose estimation, breathing detection, stillness scoring, the Dynamic Orchestra, and WebSocket networking.

Ahmad Dahlan

Visual Identity, Projection Mapping

Created a four-state fluid simulation in TouchDesigner, translating chaos scores into the projected visual language of the installation.